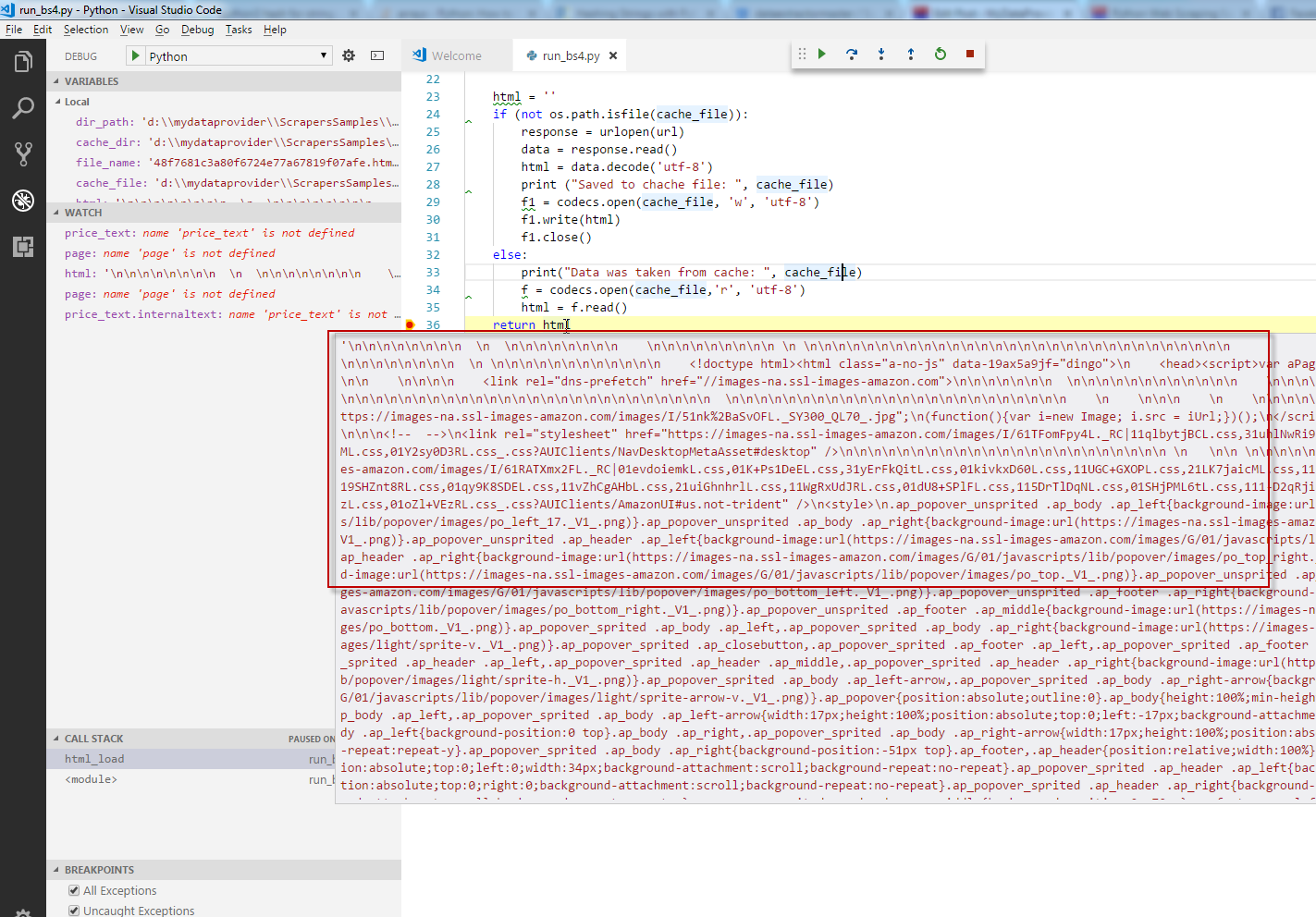

That may be the text, the code snippets, the headings, or anything else. How to proceed from here depends on what information we want to scrape from the page. We now have a BeautifulSoup object, which represents the string as a nested data structure. > soup = BeautifulSoup(page_text, 'html.parser') Let's import the library and start making some soup:

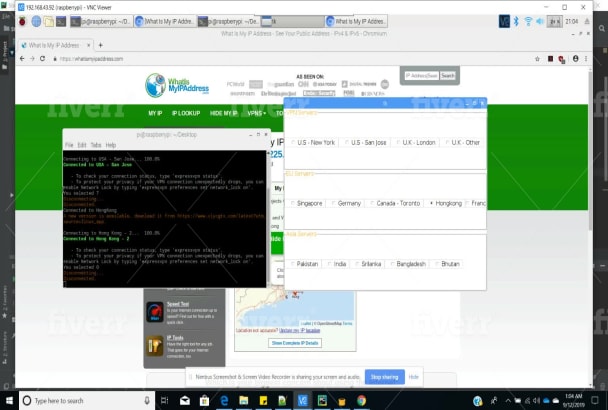

We can use this library to parse the HTML-formatted string from the data we have retrieved and extract the information we want. Trying different parsing strategies is very easy, and we do not need to worry about document encodings. The library also provides functions for navigating, searching, and modifying the parsed data. This library only parses the data, which is why we need another library to get the data as we have seen in the previous section. Beautiful Soupīeautiful Soup is a user-friendly library with functionality for parsing HTML and XML documents automatically into a tree structure. From here, we may try to manually extract the required information, but that is messy and error-prone. This returns the contents of the whole page as a string. To get the contents of the web page, we simply need to do the following: For more information on this library, including how to send a customized request, take a look at the documentation and user guide. Hopefully, we don't see the dreaded 404! It is possible to customize the get() request with some optional arguments to modify the response from the server. To see if the request was successful, check the status with r.status_code. The object r is the response from the host server and contains the results of the get() request. To import the library and get the page just requires a few lines of code: The requests library is used for making HTTP requests to a URL.Īs an example, let's say we're interested in getting an article from the blog. The first step in the process is to get data from the web page we want to scrape. If you're new to Python and need some learning material, take a look at this track to give you a background in data analysis. This article is aimed at people with a little more experience in Python and data analysis. The best approach is very use-case dependent. It is the first step for many interesting projects! However, there is no fixed technology or methodology for Python web scraping. 
This may be text, numerical data, or even images. Web scraping is the process of extracting information from the source code of a web page. You'll find the tools and the inspiration to kickstart your next web scraping project. Looking for Python website scrapers? In this article, we will get you started with some helpful libraries for Python web scraping. Through the rest of the pages of this example dataset, or rewriting thisĪpplication to use threads for improved speed.Here are some useful Python libraries to get you started in web scraping. Some more cool ideas to think about are modifying this script to iterate Using Python or we can save it to a file and share it with the world. Now we can do all sorts of cool stuff with it: we can analyze it Buyers : Prices : Ĭongratulations! We have successfully scraped all the data we wanted fromĪ web page using lxml and Requests.
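The Requests step (fetch the page, check the status, read the text) can be wrapped in a small helper. This is a minimal sketch assuming the `requests` package is installed; the helper name `get_page_text`, the `User-Agent` string, and the 10-second timeout are illustrative choices, not part of the original walkthrough. `headers` and `timeout` are standard optional arguments of `requests.get()`.

```python
import requests


def get_page_text(url, timeout=10.0):
    """Fetch `url` and return the page contents as a string (hypothetical helper)."""
    # Optional arguments customize the request; this User-Agent value is an
    # illustrative assumption, not something the target site requires.
    headers = {"User-Agent": "example-scraper/0.1"}
    r = requests.get(url, headers=headers, timeout=timeout)

    # r.status_code is the HTTP status: 200 means success, 404 means "not found".
    # raise_for_status() turns any 4xx/5xx status into a requests.HTTPError.
    r.raise_for_status()

    # r.text is the response body decoded to a str.
    return r.text
```

Usage might look like `page_text = get_page_text("https://example.com/some-article")`, where the URL is a placeholder. Raising an exception on a 404 (rather than quietly returning an error page) makes failed downloads hard to miss.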
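Once we have the page as a string, pulling out the text, code snippets, and headings mentioned in the Beautiful Soup section might look like the sketch below. It assumes `bs4` is installed, that headings are `<h1>` to `<h3>` tags, and that code snippets sit in `<pre>` blocks; real pages may use different markup. The function name `summarize_page` is hypothetical.

```python
from bs4 import BeautifulSoup


def summarize_page(page_text):
    """Extract title, headings, code snippets and paragraph text (hypothetical helper)."""
    # 'html.parser' ships with Python; 'lxml' or 'html5lib' can be swapped in
    # here if those optional parsers are installed.
    soup = BeautifulSoup(page_text, "html.parser")

    # soup.title is the first <title> tag, or None if the page has none.
    title = soup.title.get_text(strip=True) if soup.title else None

    # find_all() accepts a list of tag names and returns matches in document order.
    headings = [h.get_text(strip=True) for h in soup.find_all(["h1", "h2", "h3"])]

    # Assumption: code snippets are wrapped in <pre> blocks.
    code_snippets = [pre.get_text() for pre in soup.find_all("pre")]

    # Join nested strings (e.g. text inside <b>) with single spaces.
    paragraphs = [p.get_text(" ", strip=True) for p in soup.find_all("p")]

    return {
        "title": title,
        "headings": headings,
        "code_snippets": code_snippets,
        "paragraphs": paragraphs,
    }
```

Swapping the parser only means changing the second argument to `BeautifulSoup`, which is what makes trying different parsing strategies cheap.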
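Beautiful Soup's functions for navigating, searching, and modifying the parse tree can be illustrated on a small hand-written fragment; the markup and class names below are made up for illustration. The sketch uses `find()`, `find_all()`, the CSS-selector method `select_one()`, the `.parent` attribute and `find_next_sibling()` for navigation, and `decompose()` to delete a tag.

```python
from bs4 import BeautifulSoup

# A made-up fragment standing in for a real page.
html_doc = """
<div class="post">
  <h2>Title</h2>
  <p>First paragraph.</p>
  <p class="ad">Buy now!</p>
  <a href="/page-2">Next page</a>
  <a>Anchor without a link</a>
</div>
"""
soup = BeautifulSoup(html_doc, "html.parser")

# Searching: find() returns the first match, find_all() returns every match.
first_paragraph = soup.find("p")
links = [a["href"] for a in soup.find_all("a", href=True)]  # skip <a> tags without href

# Searching with a CSS selector.
ad = soup.select_one("p.ad")

# Navigating the tree: move up to the parent and sideways to a sibling.
container = first_paragraph.parent
after_heading = soup.h2.find_next_sibling("p")

# Modifying: remove the ad from the tree entirely.
ad.decompose()
remaining = [p.get_text(strip=True) for p in soup.find_all("p")]

print(links)      # ['/page-2']
print(remaining)  # ['First paragraph.']
```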
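The closing ideas (scraping with lxml, saving the results to a file, iterating over several pages, and using threads for speed) can be combined in a sketch like the one below. It assumes `lxml` is installed. The markup it expects (buyer names in `<div title="buyer-name">`, prices in `<span class="item-price">`) and all function names are hypothetical; `fetch` is any callable that returns a page's HTML as a string, for instance a wrapper around `requests.get(url).text`.

```python
import csv
from concurrent.futures import ThreadPoolExecutor

from lxml import html


def parse_page(page_html):
    """Return (buyer, price) pairs from one page (hypothetical markup)."""
    tree = html.fromstring(page_html)
    # Assumed markup: buyer names in <div title="buyer-name">,
    # prices in <span class="item-price">.
    buyers = tree.xpath('//div[@title="buyer-name"]/text()')
    prices = tree.xpath('//span[@class="item-price"]/text()')
    return list(zip(buyers, prices))


def scrape_all(urls, fetch, max_workers=4):
    """Fetch every URL on a thread pool, then parse; rows keep the order of `urls`."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # Downloads are I/O-bound, so several can wait on the network at once.
        pages = list(pool.map(fetch, urls))
    rows = []
    for page_html in pages:
        rows.extend(parse_page(page_html))
    return rows


def save_csv(rows, path):
    """Write (buyer, price) rows to a CSV file with a header line."""
    with open(path, "w", newline="", encoding="utf-8") as f:
        writer = csv.writer(f)
        writer.writerow(["buyer", "price"])
        writer.writerows(rows)
```

`ThreadPoolExecutor.map()` yields results in the order of its input, so the saved rows stay in page order even though the downloads overlap.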
